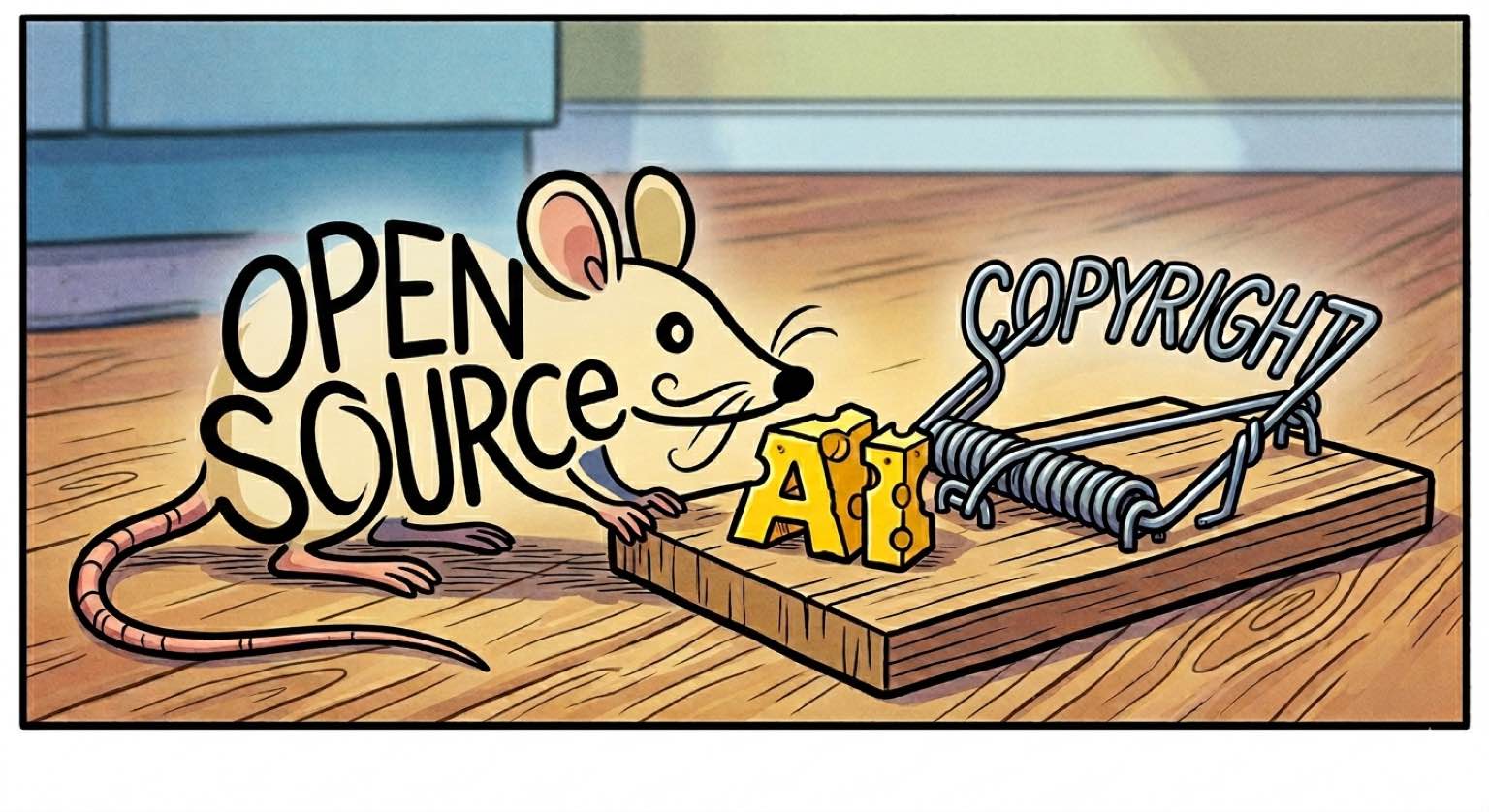

AI vs. Open Source, Part 1: The Empty Grant

The step-function increase in AI’s ability to generate code is looming over open source. What frontier models can do today is a warning shot, and it is already enough to dissolve the legal scaffolding that makes open source enforceable. Historically, companies with flagship open-source software have relied on relicensing as a weapon to protect their competitive advantage, mostly in response to cloud vendors offering their code as managed services. MongoDB moved from AGPL to SSPL in 2018. CockroachDB went from Apache 2.0 to BSL in 2019, then to a custom CockroachDB license in 2024. Elasticsearch followed in 2021, HashiCorp switched Terraform and Vault to BSL in 2023, Sentry created an entirely new license (FSL) that same year, and Redis went source-available in 2024. That weapon is now obsolete, because AI threatens to make licenses completely irrelevant.

AI-generated code? No copyright for you!

Every open source license is a conditional grant of copyright. The author holds the copyright, and the license grants permission to use the work only if certain conditions (e.g., attribution, source disclosure, or reciprocal licensing) are satisfied. This is the only enforcement mechanism that sustains open source through the chain of derived works. Without it the entire structure collapses.

AI-generated code is not copyrightable. The D.C. Circuit held in Thaler v. Perlmutter that the Copyright Act requires a human author. The U.S. Copyright Office confirmed that providing prompts to an AI does not, by itself, constitute sufficient human authorship. This is U.S. law; other jurisdictions differ, but the enforcement gap is universal. The copyright status of “AI-assisted” code is still a legal gray area: code written with AI assistance can be copyrightable, but the line between AI-generated and merely AI-assisted remains undefined. Is changing a comment in AI-generated code enough to make it AI-assisted? No court has drawn that line.

AI-generated code is already at the gate. Open source maintainers are drowning in “vibe coded” pull requests: AI-generated submissions with minimal human oversight. Gentoo has banned AI-generated code contributions outright. NetBSD classifies them as tainted code requiring core developer approval. The Linux kernel allows them but mandates disclosure and full human accountability. Quality is the basis for rejection today. That filter has a shelf life. As the models improve, the quality will improve. The ethical case for rejecting machine-generated contributions becomes harder to make when the code is indistinguishable from human work.

Code without copyright cannot be licensed. The requirement to share source becomes unenforceable for modifications that have no copyright. Such code falls into a legal void: not public domain (no affirmative dedication), not proprietary (no copyright to assert), not open source (no license that can attach). The license text still sits in the repository. It is an empty grant.

To free, or not to free

Consider any corporation that writes and maintains code under an open source license. If AI-generated code enters its repository, the license grant over those contributions is void. The codebase becomes unauditable. Some files are copyrighted and licensed, others are legally unowned, and still others are legally contestable “AI-assisted” code.

Every team using Copilot or Claude Code produces ambiguously authored output. The corporation is strongly incentivized to close the source rather than maintain an open codebase with no legal protection. The relicensing wave already demonstrated this pattern: when the legal basis for openness stops serving the business, the business closes the code. AI-generated code is a larger threat than cloud vendors ever were. Cloud vendors merely undercut these companies on price. AI dissolves the legal mechanism that made their licenses mean anything.

Why reciprocate when you can replicate?

Even if all the lawyers in the world agreed on the copyright question, a second problem remains: AI’s ability to clone functionality with new source code.

Clean-room reimplementation has precedent. Sega v. Accolade established that reverse engineering for interoperability is fair use. Yet there was no widespread reimplementation of open source software into closed source counterparts. The economics did not make sense. Rewriting a mature project from scratch took months of expert labor, regardless of what license it carried. Compliance was cheaper than reimplementation. Until now.

With AI, the cost of generating code has fallen to near zero. Dan Blanchard rewrote the Python chardet library with Claude Code to sidestep the LGPL. A project that would have taken a team months was completed in days. chardet is a proof of concept, not the end state. Software is modular, and that modularity compounds: as individual components are cloned, they become building blocks for cloning progressively larger and more complex systems. This is not a today problem. It is a next-year problem. MALUS.sh took the concept further, launching as a satirical “clean room as a service.” Feed it any open source project, and it produces a functionally equivalent clone stripped of all license obligations. No attribution. No copyleft. The satire landed because the tool works.

Granted, that is still legally fraught because the AI model was trained on open source software, and traditional clean-room doctrine required that the reimplementing team had no access to the original source. Whether the model’s transformation of training data into weights constitutes a sufficient “clean room wall” is novel law. No court has ruled.

Regardless, enforcement at scale is nearly impossible. You cannot detect every AI-generated clone, let alone pursue each one. The economic bulwark of expensive code writing is gone irrespective of the legal outcome. And the cost will only continue to drop. What frontier models clone imperfectly today, the next generation will clone competently. The question is whether actions will follow incentives.

What remains

Open source has survived every prior threat by adapting its licensing regime. Tivoization got GPLv3. Cloud free-riding got SSPL and BSL. Importantly, the legal machinery worked, because copyright was relevant and valuable. AI is different. The machinery itself is failing. The grant is empty and the moat is collapsing. The onslaught of automated discovery and generation is incentivizing institutions to close their source code.

That would be survivable if the community that built open source could regroup and adapt as it always has. Part 2 examines why that is no longer a safe assumption.

Linked in this post

AI Collapses the Economic Moat of Clean-Room Reimplementation

The copyleft moat was never purely legal. It was economic: compliance was cheaper than reimplementation. AI collapsed that cost.

Copyright Is the Sole Enforcement Mechanism for Open Source

Every open source license is a conditional grant of copyright, and copyright is the only enforcement mechanism that sustains open source.

The AI-Assisted vs. AI-Generated Boundary Is Legally Undefined

The Copyright Office says AI assistance does not bar copyrightability but has not defined where AI-assisted ends and AI-generated begins.

The Empty Grant: AI-Generated Code Creates Unenforceable Licenses

AI-generated code is not copyrightable, which means it cannot carry license conditions.