The grand flattening: AI Slop is just the next step

10 October 2025

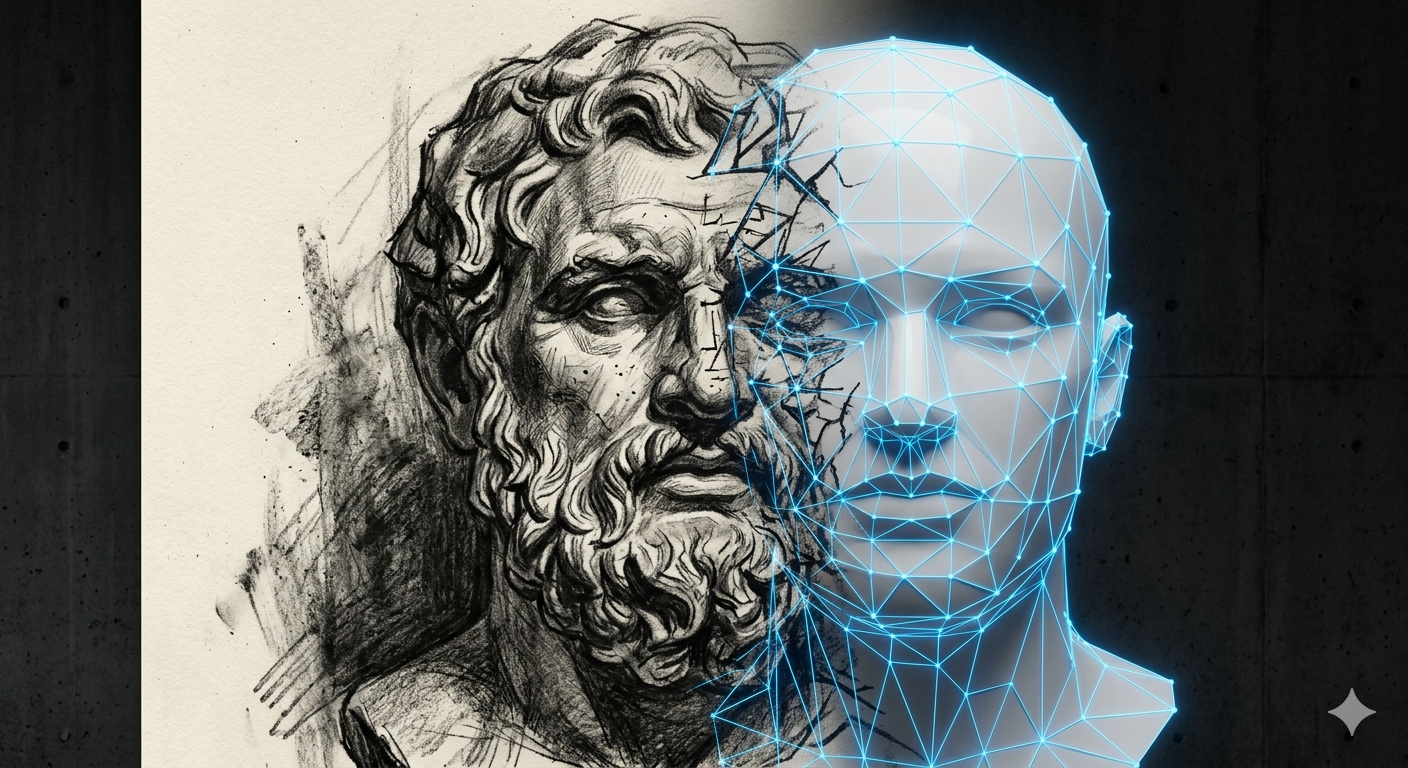

Reality is an entity of vast, irreducible complexity. It is far more than the human mind can grasp, yet we are forced to operate within it. To cope, we rely on simplified models and simulations; essentially, shorthand versions of the world that fit inside our heads. The problem is fidelity. Eventually, the model breaks, and we are forced to confront phenomena we didn’t account for and don’t know how to handle.

Humanity’s response to this problem has not been improvement alone. Each advance in our models brought with it a particular hubris: the conviction that this time, the map was complete; that what couldn’t be captured was simply not worth capturing. And it is precisely that conviction that licenses the coercion. If the model is complete, then deviation isn’t a sign of the model’s limits. It is a sign of reality’s defects.

Better maps didn’t reduce the impulse to redraw the territory. They justified it.

To eliminate these ‘edge cases,’ humanity has spent millennia on a grand project: forcing reality to conform to a model we can predict and control. Philosophers have both fueled this project and warned of its side effects, warnings we have summarily ignored. The culmination of this effort is the ‘AI Slop’ currently inundating us. An ironic final step in subverting our perception of reality itself.

The legibility project of the pre-modern era

Humans tend to be “illegible”. They are complicated and diverse. Every group has its own customs, traditions and morality. Controlling and ruling over such an illegible group is nearly impossible. So this grand project started millennia ago as a mechanism to control people by reducing their illegibility. By making them legible. By flattening their complexity and diversity into a ‘compressible’ set of behaviors. Among the earliest recorded efforts in this direction are the Code of Hammurabi and the Manusmṛti.

Yes, these are known as some of the earliest legal texts, but then again, a legal system is essentially a compression algorithm for human behavior; its goal is to reduce a diverse population to a predictable, manageable set of outputs. Of course, these models made errors in their predictions. Such errors are referred to as “crimes”, and entire institutions are dedicated to “correcting” them, not by improving the model, but by coercing human behavior to fit the model. “Justice” was really about systemic control.

Of course, due to limitations of technology, the model had incredibly low resolution, and it sought to model only the human behaviors that needed control within the confines of the day’s political sovereignty. For the most part, these models left the natural world and our inner worlds alone.

As the project matured, the philosophers were on a mission to build a descriptive model of reality. Plato’s Theory of Forms modeled the world’s diversity as mere ‘noise’ deviating from a perfect ideal. Aristotle provided the methodology for deconstructing reality into ‘silos of legibility’ under the fatal assumption that nothing of value is lost in the gaps. Mathematicians such as Aryabhata and Brahmagupta created descriptive maps to navigate the heavens. However, the impulse toward a prescriptive reality was already visible in the shadows. It lived in astrology, which forced human destiny to fit a celestial map, and in the sale of indulgences, which downsampled the infinite complexity of sin into a quantifiable financial transaction. The pivot to a world coerced to conform to the map was not a new idea, but it could not be realized at scale until better technology came along.

The objectivity of modernity

The Renaissance and modernity introduced us to the concept of objectivity: the notion that things are true regardless of a subject. The philosophers of this age viewed reality as an object to be observed, dissected, studied. And somehow, we could do it objectively, as if we weren’t part of this object we were studying. This paradigm alienated us from our own existence. This alienation allowed philosophers to turn the gaze of objectivity inward, onto our own lives and our inter-subjectivity. They dissected how we relate to each other, and how we work together to produce goods and make progress. This was categorized and studied with ever more precision. We had new categories to peer into. There was economics and there was psychology and there was political science and there was ethics. It was almost as if none of them had anything to do with the others, each pursuing its own investigations toward its own objective truths.

It wasn’t long before this inward gaze of economics turned onto human work, and it didn’t see people. It saw functions. A watchmaker, viewed through the economic lens, was not a person embedded in a tradition, a community, a set of relationships. He was a bundle of discrete, separable processes: material procurement, part fabrication, assembly, quality control, distribution. The model couldn’t perceive anything it couldn’t categorize. And what it couldn’t perceive, it treated as if it didn’t exist.

This was the Industrial Age: a period of selective blindness. The watchmaker didn’t disappear because someone chose to erase him. He disappeared because the model looking at him had no category for what he actually was. We didn’t just make more watches; we created a world where the human was only allowed to exist as a low-resolution component of a larger machine.

Ontological blinders of the information age

The 20th century provided the necessary technologies to unify the balkanized silos of modernity. Through the work of Alan Turing, John von Neumann, and Claude Shannon, the messy kinetics of physical reality were recast as pure information processing. “Process Efficiency” was replaced by “Algorithmic Optimization.”

We resurrected Plato’s theory of Forms, but the forms were now idealized mathematical models. Any deviation from the model became a systematic ‘error’ that needed rectification. For instance, the nuances and peculiarities around the problems of routing trains between cities, laying down water and sewer pipes in a neighborhood, and moving data packets around a network were all ‘unified’ by the same optimization algorithms, and in that process those very same nuances and peculiarities were completely marginalized. A missed package was no longer a logistical accident: it was an “error” requiring more “fault tolerance.” A worker calling in sick was no longer a human event: it was a “node failure” requiring a “redundancy” patch. These changes happened in the background of our lives, hidden by the perceived convenience of the tools.

The insidious turn occurred when the model overrode the reality. The diversity of the world was rebranded as “noise” that failed to map to the model, rather than the model failing to map to the world. Algorithms started changing human behavior so that it remained compliant with the model’s expectations.

The upshot is a society that has mistaken the models for reality. The mask has become the face. You see it in social media where a curated “Instagram life” is accepted as a true representation of existence. You see it in the economy, where macro-economic abstractions like GDP are deemed more “real” than actual economic health. We have now flattened ourselves to be legible to these models. We optimize our lives to improve a credit score as if the score were the reality. “Pics or it didn’t happen” is the demand for algorithmic validation of our own subjectivity. We have become ontologically blind to anything that cannot be accounted for by the model.

The manufactured reality of the intelligence age

The 21st century introduced the ultimate agent of the Grand Project: Generative AI. This technology finally detaches the Map from the Territory. But it does so in a way that is categorically different from everything that came before it.

Previous technologies mediated reality. The photograph selected a frame. Television broadcast a produced version of events. Social algorithms surfaced a curated slice of human expression. In each case, the underlying reality was still there, generating the inputs. The mask had become the face; but there was still a face underneath.

Generative AI breaks this relationship entirely. It does not compress reality. It bypasses it. The inputs to a large language model are not live signals from the world; they are prior compressions: text, images, and records of what humans said and made, all of which have already passed through every filter described above. The model trains on the averaged residue of a civilization that had already been flattening itself for centuries. It then generates new outputs optimized for coherence with that averaged signal: maximally legible, frictionlessly consumable, scrubbed clean of the noise that makes any particular perspective distinct from the statistical mean.

We call the result “AI Slop.” It is a pejorative that describes the soulless, uncanny nature of these creations. Yet, we cannot stop consuming it. We are addicted to it because it is the path of least resistance. It is content with the highest possible fidelity to the model and the lowest possible fidelity to any individual reality. It has no author, no context, no stake. It is the signal of the average; which is to say, the signal of no one.

We consume it anyway, and at scale. Not because we are foolish, but because this entire arc has been progressively reducing our tolerance for friction, for illegibility, for the effort that genuine encounter with reality requires. AI Slop is not a cause. It is a symptom of a sensory system that has been recalibrated, over centuries, to mistake the model for the thing.

The momentum driving this is nearly four millennia in the making. From Hammurabi’s code to Shannon’s information theory, every step has iteratively eliminated the human element as an “inefficiency.” We are now so far immersed in this episteme that we have lost the ability to distinguish the mask from the face. Previously, the mask became the face. Now there are no more faces. Only masks.
